Can AI Diagnose Disease? The Reality of AI in Medical Diagnostics

Apr, 17 2026

Quick Takeaways

- AI excels at pattern recognition in imaging (Radiology, Pathology).

- It is currently a "co-pilot," not a replacement for doctors.

- Accuracy is high for specific tasks but drops when faced with rare cases.

- Ethical concerns regarding data bias and accountability remain unsolved.

How AI Actually "Sees" Disease

To understand if AI can diagnose, we first need to clear up what it's actually doing. It isn't "thinking" like a doctor. Instead, it relies on machine learning, a branch of AI that uses statistical techniques to let computers learn from data without being explicitly programmed. If you feed a system 100,000 images of malignant melanoma and 100,000 images of benign moles, the AI identifies the mathematical differences in pixel patterns, including some that a human eye might miss.
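
The idea of deriving a rule from labeled examples, rather than writing it by hand, can be sketched with one of the simplest classifiers, nearest centroid. This is a toy, not how clinical systems work: it assumes each image has already been reduced to two hypothetical numeric features, and the training data below is invented.

```python
# Toy "learn from labeled examples" classifier: nearest centroid.
# ASSUMPTION: each image has already been reduced to two made-up features
# (e.g. "border irregularity" and "colour variance", scaled 0-1). Real systems
# learn from raw pixels with far more data and parameters.
from math import dist


def train_centroids(examples):
    """Average the feature vectors for each label. The decision rule comes
    from the data, not from hand-written if/else rules."""
    sums = {}
    counts = {}
    for features, label in examples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}


def predict(centroids, features):
    """Assign the label whose centroid is closest (Euclidean distance)."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


# Hypothetical labeled training data: (features, label)
training = [
    ([0.90, 0.80], "malignant"),
    ([0.80, 0.90], "malignant"),
    ([0.20, 0.10], "benign"),
    ([0.10, 0.30], "benign"),
]
model = train_centroids(training)
print(predict(model, [0.85, 0.70]))  # malignant
print(predict(model, [0.15, 0.20]))  # benign
```

Adding more labeled examples changes the centroids, and therefore the rule, without anyone editing the code.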

This is where deep learning comes in. By using artificial neural networks with many layers, the AI can identify a hierarchy of features. First, it sees a line; then a curve; then a specific texture associated with a tumor. This approach has pushed AI medical diagnosis into the spotlight, particularly in fields where the "data" is visual.
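
The "first, it sees a line" step can be illustrated with a single convolution, the operation a network's first layer applies to an image. In this sketch the kernel is hand-picked as an assumption for illustration; in a real deep network the kernel values are learned during training, and later layers combine many such feature maps into curves and textures.

```python
# First-layer "line detector" of a convolutional network, written by hand.
# ASSUMPTION: the kernel is fixed for illustration; real networks learn it.


def conv2d(image, kernel):
    """Valid 2-D cross-correlation (what CNN libraries call 'convolution')."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def relu(feature_map):
    """Keep only positive responses, as a typical activation function does."""
    return [[max(0, v) for v in row] for row in feature_map]


# Toy 5x5 grayscale image: dark on the left, bright on the right.
image = [[0, 0, 1, 1, 1] for _ in range(5)]
# Vertical-edge kernel: responds where brightness rises from left to right.
vertical_edge = [[-1, 0, 1], [-1, 0, 1], [-1, 0, 1]]

for row in relu(conv2d(image, vertical_edge)):
    print(row)
```

Each printed row is `[3, 3, 0]`: the response is strong where the window straddles the dark-to-bright boundary and zero over the uniformly bright region.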

The Heavy Hitters: Where AI Wins

There are certain areas of medicine where AI is already matching, and on some narrow tasks beating, human performance. The most prominent is radiology, the medical specialty that uses imaging techniques such as X-rays, CT scans, and MRIs to diagnose disease. AI doesn't get tired. A radiologist might be in the twelfth hour of a shift and miss a tiny nodule on a lung scan; a computer doesn't have a "bad day."

In digital pathology, AI can scan thousands of cells on a biopsy slide in seconds. For example, in breast cancer diagnosis, AI can highlight "areas of interest," allowing the pathologist to zoom in on the most suspicious cells rather than hunting for them manually. This can cut the time to diagnosis and reduce the chance of human error.
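
The "areas of interest" workflow can be sketched as a simple triage step. This assumes the slide has already been cut into tiles and that some model has given each tile a suspicion score between 0 and 1; the tile IDs, scores, and the 0.5 threshold are hypothetical.

```python
# Sketch of "areas of interest" triage on a biopsy slide.
# ASSUMPTION: tile IDs, model scores, and the threshold are made up.


def areas_of_interest(tile_scores, threshold=0.5, top_k=3):
    """Return up to top_k tile IDs at or above threshold, most suspicious first.

    The pathologist still reviews the slide; this only decides where to look first.
    """
    flagged = [(tile, s) for tile, s in tile_scores.items() if s >= threshold]
    flagged.sort(key=lambda item: item[1], reverse=True)
    return [tile for tile, _ in flagged[:top_k]]


scores = {"A1": 0.12, "A2": 0.91, "B1": 0.47, "B2": 0.66, "C1": 0.05, "C2": 0.78}
print(areas_of_interest(scores))  # ['A2', 'C2', 'B2']
```

The design keeps the AI in a ranking role: it reorders the pathologist's attention but does not remove any tile from review.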

| Field | AI Strength | Human Strength | Current Status |
|---|---|---|---|
| Dermatology | Pixel-perfect pattern matching | Contextual history and patient feel | High accuracy in screenings |
| Radiology | Speed and fatigue-free scanning | Complex clinical reasoning | Standardized co-pilot usage |
| Genomics | Processing billions of base pairs | Interpreting phenotypic expression | Essential for rare disease ID |
| Cardiology | EKG anomaly detection | Patient lifestyle assessment | Early warning systems |

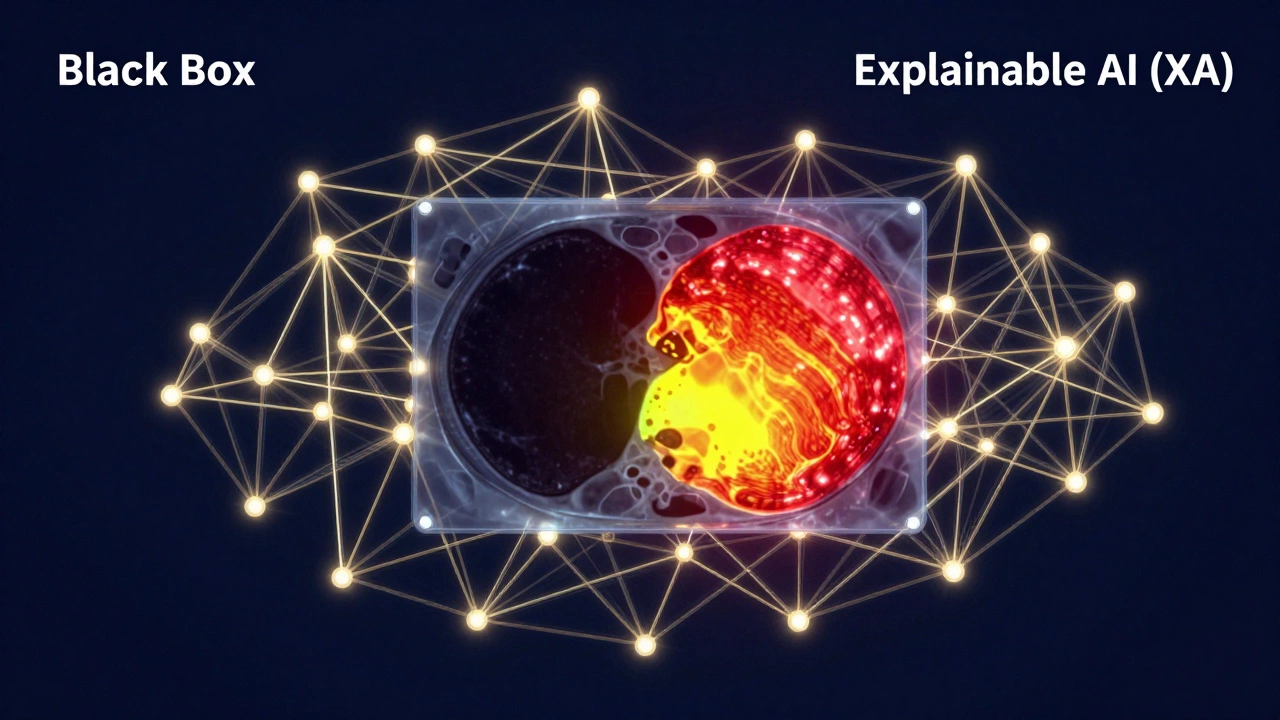

The "Black Box" Problem and the Trust Gap

If a doctor tells you that you have an autoimmune disorder, you can ask them "Why?" and they can explain the biomarkers and symptoms. With many AI systems, we face the "Black Box" problem. The AI may arrive at a correct diagnosis, but even its developers cannot explain exactly which pixels or data points led to that conclusion. This is a massive hurdle in healthcare. How can a surgeon operate on a patient based on a "trust me" from an algorithm?

This has led to the rise of Explainable AI (XAI), a set of processes and methods that allow human users to comprehend and trust the results created by machine learning algorithms. XAI tools often produce "heat maps" that show the doctor which regions of an image most influenced the AI's conclusion. This turns the AI from a mysterious oracle into a more transparent tool.
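
One simple way to build such a heat map is occlusion sensitivity: hide one patch of the image at a time and measure how much the model's score drops. It is only one XAI technique among several (Grad-CAM and SHAP are others). In this sketch, `toy_model` is a hypothetical stand-in invented for the example; a real system would call a trained network.

```python
# Occlusion-sensitivity heat map, one simple XAI technique.
# ASSUMPTION: toy_model is invented; a real system would use a trained network.


def toy_model(image):
    """Hypothetical model: its 'abnormality score' is the mean brightness
    of the top-left 2x2 region, so that is where its evidence lives."""
    return sum(image[r][c] for r in range(2) for c in range(2)) / 4


def occlusion_map(model, image, patch=2, fill=0):
    """Blank out each patch in turn and record how much the score drops.

    Large drops mark the regions the model relied on most."""
    base = model(image)
    rows, cols = len(image), len(image[0])
    heat = [[0.0] * cols for _ in range(rows)]
    for r0 in range(0, rows, patch):
        for c0 in range(0, cols, patch):
            occluded = [row[:] for row in image]
            for r in range(r0, min(r0 + patch, rows)):
                for c in range(c0, min(c0 + patch, cols)):
                    occluded[r][c] = fill
            drop = base - model(occluded)
            for r in range(r0, min(r0 + patch, rows)):
                for c in range(c0, min(c0 + patch, cols)):
                    heat[r][c] = drop
    return heat


scan = [[1, 1, 0, 0], [1, 1, 0, 0], [0, 0, 1, 1], [0, 0, 1, 1]]
for row in occlusion_map(toy_model, scan):
    print(row)
```

Only the top-left patch lights up, even though the bottom-right patch is just as bright. The heat map reflects what the model actually used, which is exactly what a doctor needs to check.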

The Danger of Biased Data

AI is only as good as the data it eats. If an AI is trained on 50,000 images of skin cancer, but 95% of those images come from fair-skinned patients in Northern Europe, the AI is likely to be dangerously inaccurate when diagnosing a patient with dark skin. This isn't a failure of the math; it's a failure of the data collection. We've already seen cases where algorithms used to predict healthcare needs inadvertently prioritized white patients over equally sick Black patients. The algorithm used "healthcare spend" as a proxy for "sickness," ignoring the fact that systemic barriers mean less money is spent on Black patients' care, so lower spending was misread as better health.
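
A single overall accuracy figure can hide this kind of bias, which is why audits usually break performance down by subgroup. The sketch below computes sensitivity (the share of actual cases the model catches) per skin-tone group. The records are invented for illustration; a real audit would use a held-out test set with verified diagnoses.

```python
# Per-group sensitivity audit to surface dataset bias.
# ASSUMPTION: the records are invented, not results from any real model.
from collections import defaultdict


def sensitivity_by_group(records):
    """Sensitivity = true positives / all actual positives, per group."""
    tp = defaultdict(int)
    positives = defaultdict(int)
    for group, has_disease, flagged in records:
        if has_disease:
            positives[group] += 1
            if flagged:
                tp[group] += 1
    return {g: tp[g] / positives[g] for g in positives}


# (skin-tone group, actually has melanoma, model flagged it)
records = [
    ("lighter", True, True), ("lighter", True, True),
    ("lighter", True, True), ("lighter", True, False),
    ("darker", True, True), ("darker", True, False),
    ("darker", True, False), ("darker", True, False),
    ("darker", False, False),
]
print(sensitivity_by_group(records))  # {'lighter': 0.75, 'darker': 0.25}
```

Pooled together, these records give a sensitivity of 0.5, a number that conceals the fact that the model misses three out of four cancers in the darker-skinned group.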

To fix this, researchers are pushing for diverse datasets. But the reality is that high-quality, labeled medical data is hard to get due to HIPAA and other privacy laws. Balancing patient privacy with the need for diverse training data is the tightrope the industry is currently walking.

Can It Replace the Doctor?

The short answer is no. Diagnosis isn't just about identifying a pattern; it's about clinical judgment. A patient might have a cough and a fever. An AI might see the pattern and suggest pneumonia. But a human doctor knows the patient just returned from a trip to a tropical region or that they have a history of rare allergies. This "contextual intelligence" is something AI currently lacks.

We are seeing a shift toward augmented intelligence, where the goal isn't to replace the human but to enhance them. In this model, the AI does the tedious work (sorting through thousands of images or checking for drug interactions) and the human makes the final call. It's a partnership. The AI provides the evidence, and the doctor provides the wisdom.

Looking Ahead: The Future of Diagnosis

In the next few years, we'll likely see more "ambient diagnostics." Think of wearables that don't just count steps but use AI to detect an irregular heartbeat (arrhythmia) such as atrial fibrillation, a major risk factor for stroke, so it can be treated before a stroke happens. We are moving from reactive medicine (treating you when you're sick) to proactive medicine (stopping you from getting sick).
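
To make "detect an irregular heartbeat" concrete, here is a sketch of one simple irregularity measure a wearable could compute from beat timing: the coefficient of variation of RR intervals (the seconds between consecutive heartbeats). The interval values and the 0.1 cut-off are illustrative assumptions, not a validated clinical rule; real devices use trained models and confirmatory ECG.

```python
# Flag an irregular rhythm from beat-to-beat timing.
# ASSUMPTION: RR values and the 0.1 cut-off are illustrative, not clinical.
from statistics import mean, pstdev


def rr_irregularity(rr_intervals):
    """Coefficient of variation of RR intervals: spread relative to the mean."""
    return pstdev(rr_intervals) / mean(rr_intervals)


def flag_irregular(rr_intervals, cutoff=0.1):
    """Return True when beat-to-beat timing varies more than the cut-off."""
    return rr_irregularity(rr_intervals) > cutoff


steady = [0.80, 0.82, 0.79, 0.81, 0.80, 0.81]
erratic = [0.62, 1.05, 0.71, 0.98, 0.55, 1.10]
print(flag_irregular(steady))   # False
print(flag_irregular(erratic))  # True
```

Dividing by the mean makes the measure roughly independent of heart rate, so a fast but regular rhythm is not flagged just for being fast.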

We will also see a surge in multimodal AI, which combines different types of data. Instead of just looking at an X-ray, the AI will simultaneously analyze your genetic sequence, your blood pressure trends from your smartwatch, and your electronic health records. This holistic view could make diagnoses more accurate than any single-test approach.
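
One straightforward way to combine data types is "late fusion": each source gets its own model, and their probabilities for the same diagnosis are merged. The sketch below uses a fixed weighted average. The modality names, probabilities, and weights are hypothetical, and real systems often learn the combination or fuse features earlier in the network.

```python
# Minimal late-fusion sketch for multimodal AI.
# ASSUMPTION: modality names, probabilities, and weights are hypothetical.


def late_fusion(probabilities, weights):
    """Weighted average of per-modality probabilities for the same diagnosis."""
    total = sum(weights[m] for m in probabilities)
    return sum(probabilities[m] * weights[m] for m in probabilities) / total


probs = {"chest_xray": 0.70, "genetics": 0.40, "smartwatch_bp": 0.55, "ehr": 0.80}
weights = {"chest_xray": 0.4, "genetics": 0.1, "smartwatch_bp": 0.2, "ehr": 0.3}
print(round(late_fusion(probs, weights), 3))
```

Normalizing by the summed weights means a missing modality can simply be left out of `probs` and the remaining sources still produce a valid probability.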

Is AI diagnosis 100% accurate?

No. While AI can outperform humans in specific tasks, like spotting early-stage glaucoma in retinal scans, it can still produce "false positives" (saying you're sick when you aren't) and "false negatives" (missing a disease). AI is a tool to assist, not a definitive verdict.
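
A worked example shows why a positive AI result still needs verification. The counts below describe a hypothetical screening of 1,000 people, 50 of whom actually have the disease; they are invented for illustration, not taken from any study.

```python
# False positives and false negatives in a hypothetical screening.
# ASSUMPTION: the counts are invented (1,000 people, 50 actually sick).


def screening_metrics(tp, fp, fn, tn):
    """Standard confusion-matrix summaries."""
    return {
        "sensitivity": tp / (tp + fn),  # share of sick people the AI catches
        "specificity": tn / (tn + fp),  # share of healthy people correctly cleared
        "ppv": tp / (tp + fp),          # chance that a positive result is real
    }


metrics = screening_metrics(tp=45, fp=95, fn=5, tn=855)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

This prints a sensitivity and specificity of 0.90 each but a positive predictive value of only 0.32. Because the disease is rare in this group, roughly two out of three positive results are false alarms, even from a tool that is right 90% of the time.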

Who is responsible if an AI makes a wrong diagnosis?

This is one of the biggest legal gray areas today. Generally, the "physician in the loop" is held responsible. Since AI is currently marketed as a decision-support tool, the final signature on the diagnosis belongs to the human doctor, meaning they carry the legal liability.

Will I be able to diagnose myself with AI at home?

To some extent, yes. Apps that analyze skin spots or track heart rhythms are already here. However, without clinical context and a physical exam, these tools are better for "triage" (deciding if you need to see a doctor) than for final diagnosis.

Does AI use my private medical data to learn?

Most medical AI is trained on "de-identified" data, meaning your name and ID are removed. However, there is an ongoing debate about "re-identification" risks and whether patients should be compensated when their data helps build a profitable AI tool.
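
The re-identification risk comes from "quasi-identifiers": fields such as ZIP code, birth year, and sex that are not names but can single a person out when combined. A common check is k-anonymity, the size of the smallest group of records sharing the same quasi-identifier values. The records and the choice of quasi-identifiers below are invented for illustration.

```python
# Why "de-identified" is not the same as "anonymous": a k-anonymity check.
# ASSUMPTION: records and quasi-identifier choice are invented examples.
from collections import Counter


def k_anonymity(records, quasi_identifiers):
    """Smallest group size sharing the same quasi-identifier values.

    k == 1 means at least one person is unique on those fields and could be
    re-identified by linking to another dataset that contains names."""
    groups = Counter(tuple(r[q] for q in quasi_identifiers) for r in records)
    return min(groups.values())


records = [  # names and IDs already removed
    {"zip3": "021", "birth_year": 1980, "sex": "F", "dx": "asthma"},
    {"zip3": "021", "birth_year": 1980, "sex": "F", "dx": "diabetes"},
    {"zip3": "021", "birth_year": 1975, "sex": "M", "dx": "melanoma"},
]
print(k_anonymity(records, ["zip3", "birth_year", "sex"]))  # 1
print(k_anonymity(records, ["zip3"]))                       # 3
```

Coarsening or dropping fields raises k and lowers the risk, but it also removes detail a model might learn from, which is the same privacy trade-off that limits diverse training data.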

Which diseases is AI best at detecting?

AI shines in image-heavy specialties. This includes skin cancers (dermatology), lung nodules and fractures (radiology), diabetic retinopathy (ophthalmology), and certain types of leukemia (hematopathology).

Next Steps for Patients and Providers

If you are a patient, the best way to handle AI in your care is to ask your doctor: "Was an AI tool used to help with this diagnosis, and how was that result verified?" Understanding the tool used can help you understand the limitations of the result.

For providers, the goal should be "AI literacy." You don't need to know how to code a neural network, but you do need to know how to spot a biased result and how to use XAI heat maps to validate the machine's findings. The future of medicine isn't a choice between a human and a machine; it's the integration of both.